Fairness and Explainability with SageMaker Clarify

This notebook’s CI test result for us-west-2 is as follows. CI test results in other regions can be found at the end of the notebook.

Runtime

This notebook takes approximately 30 minutes to run.

Contents

Overview

Amazon SageMaker Clarify helps improve your machine learning models by detecting potential bias and helping explain how these models make predictions. The fairness and explainability functionality provided by SageMaker Clarify takes a step towards enabling AWS customers to build trustworthy and understandable machine learning models. The product comes with the tools to help you with the following tasks.

Measure biases that can occur during each stage of the ML lifecycle (data collection, model training and tuning, and monitoring of ML models deployed for inference).

Generate model governance reports targeting risk and compliance teams and external regulators.

Provide explanations of the data, models, and monitoring used to assess predictions.

In doing so, the notebook first trains a SageMaker XGBoost model using training dataset, then use SageMaker Clarify to analyze a testing dataset in CSV format. SageMaker Clarify also supports analyzing dataset in SageMaker JSON Lines dense format, which is illustrated in another notebook.

Prerequisites and Data

Initialize SageMaker

[ ]:

import sys

[ ]:

# update boto3 and sagemaker to ensure latest SDK version

!{sys.executable} -m pip uninstall -y sagemaker

!{sys.executable} -m pip install --upgrade pip

!{sys.executable} -m pip install --upgrade boto3 --no-cache-dir

!{sys.executable} -m pip install --upgrade sagemaker==2.123.0 --no-cache-dir

[ ]:

from sagemaker import Session

session = Session()

default_bucket = session.default_bucket()

default_prefix = "sagemaker/DEMO-sagemaker-clarify"

region = session.boto_region_name

# Define IAM role

from sagemaker import get_execution_role

import pandas as pd

import numpy as np

import os

import boto3

from datetime import datetime

role = get_execution_role()

s3_client = boto3.client("s3")

Download data

Data Source: https://archive.ics.uci.edu/ml/machine-learning-databases/adult/

Let’s download the data and save it in the local folder with the name adult.data and adult.test from UCI repository\(^{[2]}\).

\(^{[2]}\)Dua Dheeru, and Efi Karra Taniskidou. “UCI Machine Learning Repository”. Irvine, CA: University of California, School of Information and Computer Science (2017).

[ ]:

adult_columns = [

"Age",

"Workclass",

"fnlwgt",

"Education",

"Education-Num",

"Marital Status",

"Occupation",

"Relationship",

"Ethnic group",

"Sex",

"Capital Gain",

"Capital Loss",

"Hours per week",

"Country",

"Target",

]

if not os.path.isfile("adult.data"):

s3_client.download_file(

f"sagemaker-example-files-prod-{session.boto_region_name}",

"datasets/tabular/uci_adult/adult.data",

"adult.data",

)

print("adult.data saved!")

else:

print("adult.data already on disk.")

if not os.path.isfile("adult.test"):

s3_client.download_file(

f"sagemaker-example-files-prod-{session.boto_region_name}",

"datasets/tabular/uci_adult/adult.test",

"adult.test",

)

print("adult.test saved!")

else:

print("adult.test already on disk.")

Loading the data: Adult Dataset

From the UCI repository of machine learning datasets, this database contains 14 features concerning demographic characteristics of 45,222 rows (32,561 for training and 12,661 for testing). The task is to predict whether a person has a yearly income that is more or less than $50,000.

Here are the features and their possible values: 1. Age: continuous. 1. Workclass: Private, Self-emp-not-inc, Self-emp-inc, Federal-gov, Local-gov, State-gov, Without-pay, Never-worked. 1. Fnlwgt: continuous (the number of people the census takers believe that observation represents). 1. Education: Bachelors, Some-college, 11th, HS-grad, Prof-school, Assoc-acdm, Assoc-voc, 9th, 7th-8th, 12th, Masters, 1st-4th, 10th, Doctorate, 5th-6th, Preschool. 1. Education-num: continuous. 1. Marital-status: Married-civ-spouse, Divorced, Never-married, Separated, Widowed, Married-spouse-absent, Married-AF-spouse. 1. Occupation: Tech-support, Craft-repair, Other-service, Sales, Exec-managerial, Prof-specialty, Handlers-cleaners, Machine-op-inspct, Adm-clerical, Farming-fishing, Transport-moving, Priv-house-serv, Protective-serv, Armed-Forces. 1. Relationship: Wife, Own-child, Husband, Not-in-family, Other-relative, Unmarried. 1. Ethnic group: White, Asian-Pac-Islander, Amer-Indian-Eskimo, Other, Black. 1. Sex: Female, Male. * Note: this data is extracted from the 1994 Census and enforces a binary option on Sex 1. Capital-gain: continuous. 1. Capital-loss: continuous. 1. Hours-per-week: continuous. 1. Native-country: United-States, Cambodia, England, Puerto-Rico, Canada, Germany, Outlying-US(Guam-USVI-etc), India, Japan, Greece, South, China, Cuba, Iran, Honduras, Philippines, Italy, Poland, Jamaica, Vietnam, Mexico, Portugal, Ireland, France, Dominican-Republic, Laos, Ecuador, Taiwan, Haiti, Columbia, Hungary, Guatemala, Nicaragua, Scotland, Thailand, Yugoslavia, El-Salvador, Trinadad&Tobago, Peru, Hong, Holand-Netherlands.

[ ]:

import os

from sagemaker.session import Session

from sagemaker import get_execution_role

from sagemaker.experiments.run import Run

from sagemaker.utils import unique_name_from_base

role = get_execution_role()

sagemaker_session = Session()

experiment_name = "clarify-experiment-{}".format(datetime.now().strftime("%d-%m-%Y-%H-%M-%S"))

[ ]:

training_data = pd.read_csv(

"adult.data", names=adult_columns, sep=r"\s*,\s*", engine="python", na_values="?"

).dropna()

testing_data = pd.read_csv(

"adult.test", names=adult_columns, sep=r"\s*,\s*", engine="python", na_values="?", skiprows=1

).dropna()

training_data.head()

Data inspection

[ ]:

training_data["Sex"].value_counts().sort_values().plot(kind="bar", title="Counts of Sex", rot=0)

[ ]:

training_data["Sex"].where(training_data["Target"] == ">50K").value_counts().sort_values().plot(

kind="bar", title="Counts of Sex earning >$50K", rot=0

)

Encode and Upload the Dataset

Here we encode the training and test data. Encoding input data is not necessary for SageMaker Clarify, but is necessary for the model.

[ ]:

from sklearn import preprocessing

def number_encode_features(df):

result = df.copy()

encoders = {}

for column in result.columns:

if result.dtypes[column] == object:

encoders[column] = preprocessing.LabelEncoder()

result[column] = encoders[column].fit_transform(result[column].fillna("None"))

return result, encoders

training_data = pd.concat([training_data["Target"], training_data.drop(["Target"], axis=1)], axis=1)

training_data, _ = number_encode_features(training_data)

training_data.to_csv("train_data.csv", index=False, header=False)

testing_data, _ = number_encode_features(testing_data)

test_features = testing_data.drop(["Target"], axis=1)

test_target = testing_data["Target"]

test_features.to_csv("test_features.csv", index=False, header=False)

A quick note about our encoding: the “Female” Sex value has been encoded as 0 and “Male” as 1.

[ ]:

training_data.head()

Lastly, let’s upload the data to S3.

[ ]:

from sagemaker.s3 import S3Uploader

from sagemaker.inputs import TrainingInput

train_uri = S3Uploader.upload("train_data.csv", "s3://{}/{}".format(default_bucket, default_prefix))

train_input = TrainingInput(train_uri, content_type="text/csv")

test_uri = S3Uploader.upload(

"test_features.csv", "s3://{}/{}".format(default_bucket, default_prefix)

)

Train XGBoost Model

Train Model

Since our focus is on understanding how to use SageMaker Clarify, we keep it simple by using a standard XGBoost model.

[ ]:

from sagemaker.image_uris import retrieve

from sagemaker.estimator import Estimator

container = retrieve("xgboost", region, version="1.2-1")

xgb = Estimator(

container,

role,

instance_count=1,

instance_type="ml.m5.xlarge",

disable_profiler=True,

sagemaker_session=session,

)

xgb.set_hyperparameters(

max_depth=5,

eta=0.2,

gamma=4,

min_child_weight=6,

subsample=0.8,

objective="binary:logistic",

num_round=800,

)

with Run(

experiment_name=experiment_name,

run_name="model-train-only", # create a experiment run with only the model training on it

sagemaker_session=sagemaker_session,

) as run:

xgb.fit({"train": train_input}, logs=False)

Create Model

Here we create the SageMaker model.

[ ]:

model_name = "DEMO-clarify-model-{}".format(datetime.now().strftime("%d-%m-%Y-%H-%M-%S"))

model = xgb.create_model(name=model_name)

container_def = model.prepare_container_def()

session.create_model(model_name, role, container_def)

Amazon SageMaker Clarify

Now that you have your model set up, let’s say hello to SageMaker Clarify!

[ ]:

from sagemaker import clarify

clarify_processor = clarify.SageMakerClarifyProcessor(

role=role, instance_count=1, instance_type="ml.m5.xlarge", sagemaker_session=session

)

Detecting Bias

SageMaker Clarify helps you detect possible pre- and post-training biases using a variety of metrics. #### Writing DataConfig and ModelConfig A DataConfig object communicates some basic information about data I/O to SageMaker Clarify. We specify where to find the input dataset, where to store the output, the target column (label), the header names, and the dataset type.

[ ]:

bias_report_output_path = "s3://{}/{}/clarify-bias".format(default_bucket, default_prefix)

bias_data_config = clarify.DataConfig(

s3_data_input_path=train_uri,

s3_output_path=bias_report_output_path,

label="Target",

headers=training_data.columns.to_list(),

dataset_type="text/csv",

)

A ModelConfig object communicates information about your trained model. To avoid additional traffic to your production models, SageMaker Clarify sets up and tears down a dedicated endpoint when processing. * instance_type and instance_count specify your preferred instance type and instance count used to run your model on during SageMaker Clarify’s processing. The testing dataset is small so a single standard instance is good enough to run this example. If your have a large complex

dataset, you may want to use a better instance type to speed up, or add more instances to enable Spark parallelization. * accept_type denotes the endpoint response payload format, and content_type denotes the payload format of request to the endpoint.

[ ]:

model_config = clarify.ModelConfig(

model_name=model_name,

instance_type="ml.m5.xlarge",

instance_count=1,

accept_type="text/csv",

content_type="text/csv",

)

A ModelPredictedLabelConfig provides information on the format of your predictions. XGBoost model outputs probabilities of samples, so SageMaker Clarify invokes the endpoint then uses probability_threshold to convert the probability to binary labels for bias analysis. Prediction above the threshold is interpreted as label value 1 and below or equal as label value 0.

[ ]:

predictions_config = clarify.ModelPredictedLabelConfig(probability_threshold=0.8)

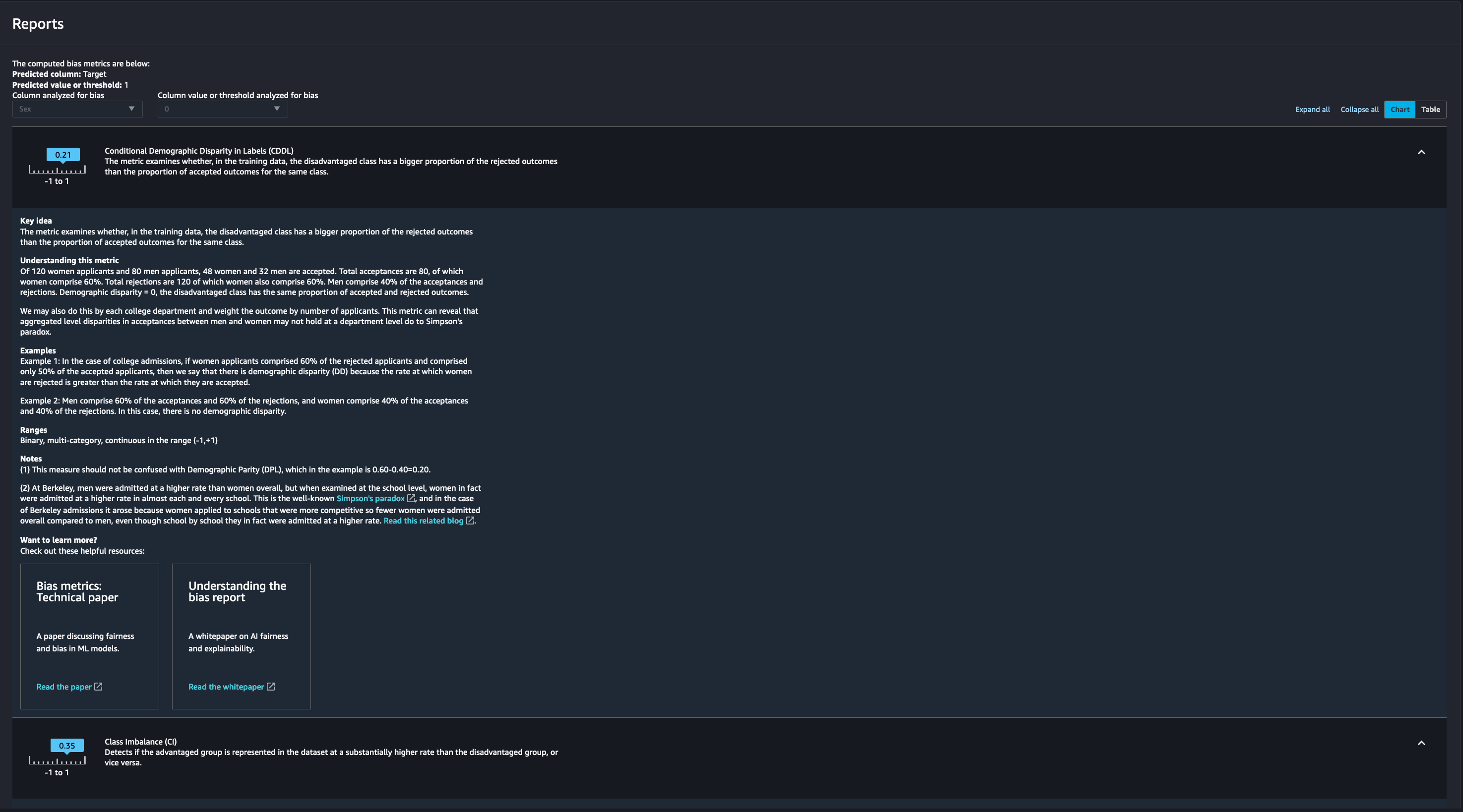

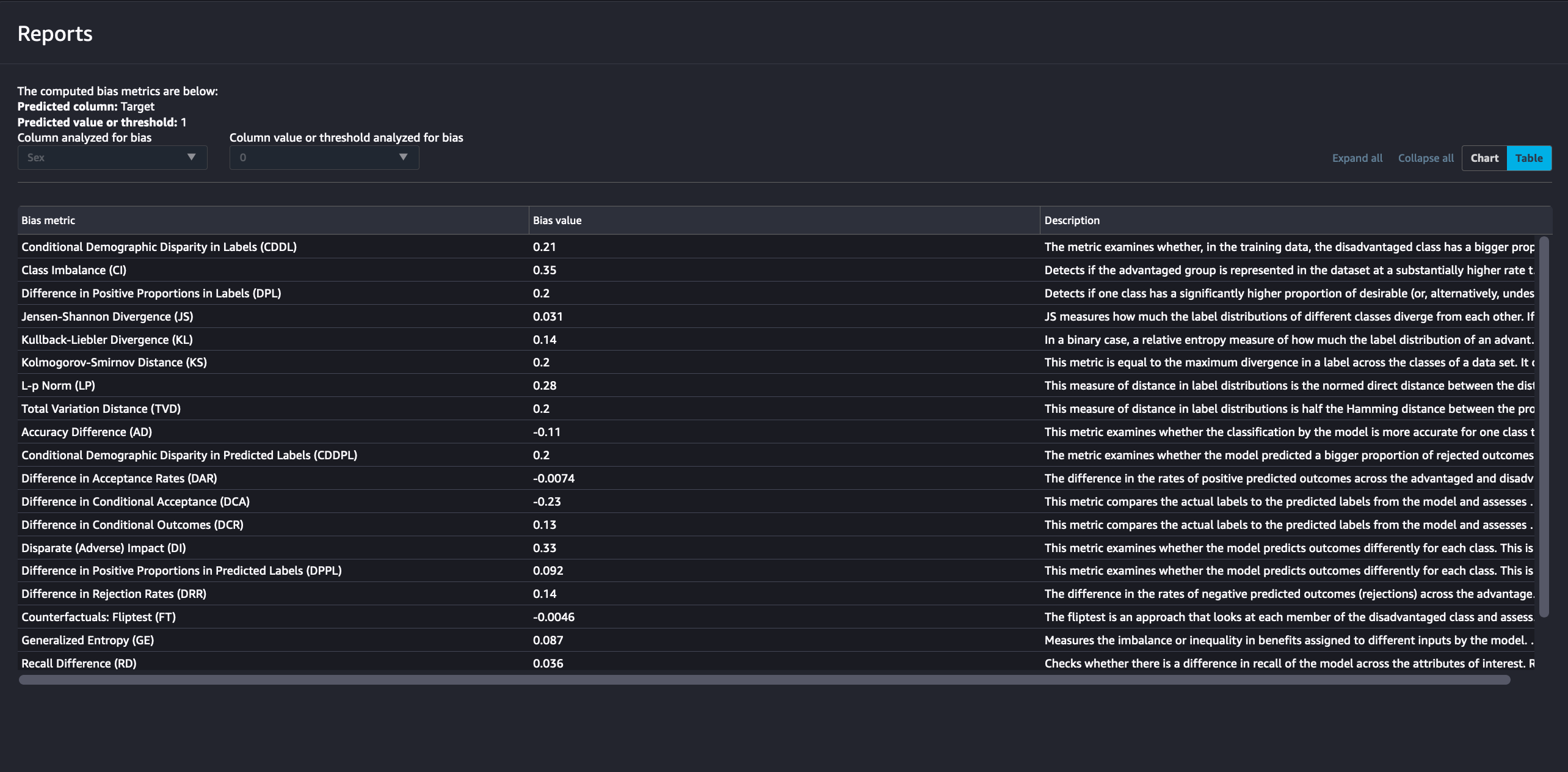

Writing BiasConfig

SageMaker Clarify also needs information on what the sensitive columns (facets) are, what the sensitive features (facet_values_or_threshold) may be, and what the desirable outcomes are (label_values_or_threshold). SageMaker Clarify can handle both categorical and continuous data for facet_values_or_threshold and for label_values_or_threshold. In this case we are using categorical data.

We specify this information in the BiasConfig API. Here that the positive outcome is earning >$50,000, Sex is a sensitive category, and Female respondents are the sensitive group. group_name is used to form subgroups for the measurement of Conditional Demographic Disparity in Labels (CDDL) and Conditional Demographic Disparity in Predicted Labels (CDDPL) with regards to Simpson’s paradox.

[ ]:

bias_config = clarify.BiasConfig(

label_values_or_threshold=[1], facet_name="Sex", facet_values_or_threshold=[0], group_name="Age"

)

Pre-training Bias

Bias can be present in your data before any model training occurs. Inspecting your data for bias before training begins can help detect any data collection gaps, inform your feature engineering, and help you understand what societal biases the data may reflect.

Computing pre-training bias metrics does not require a trained model.

Post-training Bias

Computing post-training bias metrics does require a trained model.

Unbiased training data (as determined by concepts of fairness measured by bias metric) may still result in biased model predictions after training. Whether this occurs depends on several factors including hyperparameter choices.

You can run these options separately with run_pre_training_bias() and run_post_training_bias() or at the same time with run_bias() as shown below.

[ ]:

with Run(

experiment_name=experiment_name,

run_name="bias-only", # create a experiment run with only the bias analysis on it

sagemaker_session=sagemaker_session,

) as run:

clarify_processor.run_bias(

data_config=bias_data_config,

bias_config=bias_config,

model_config=model_config,

model_predicted_label_config=predictions_config,

pre_training_methods="all",

post_training_methods="all",

)

Viewing the Bias Report

SageMaker Experiments enables you to view the bias report in SageMaker Studio under the experiments tab. The report is made available under your experiment run page in the bias reports tab

Each bias metric has detailed explanations with examples that you can explore.

You could also summarize the results in a handy table!

If you’re not a Studio user yet, you can access the bias report in pdf, html and ipynb formats in the following S3 bucket:

[ ]:

bias_report_output_path

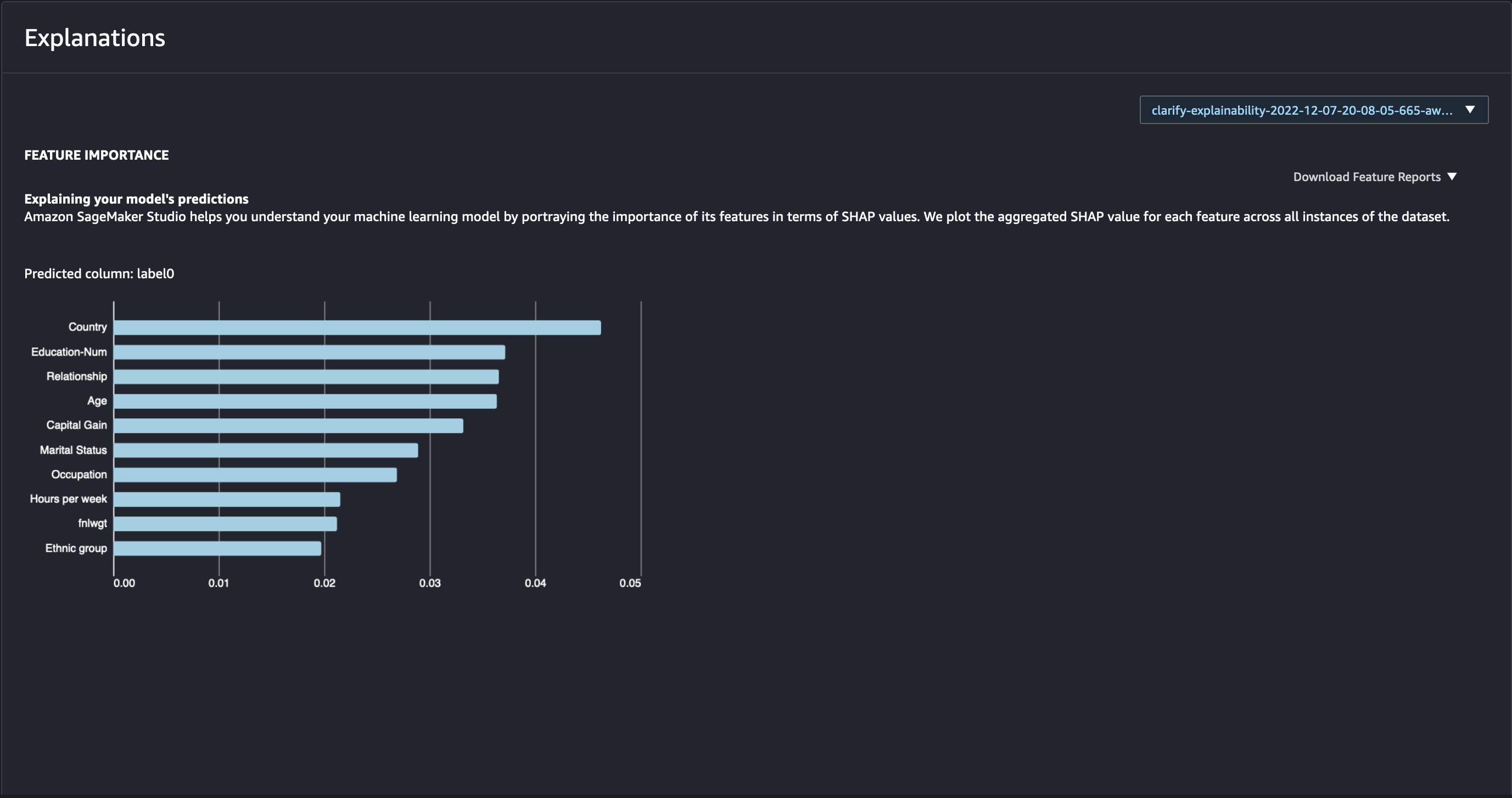

Explaining Predictions

There are expanding business needs and legislative regulations that require explanations of why a model made the decision it did. SageMaker Clarify uses SHAP to explain the contribution that each input feature makes to the final decision.

Kernel SHAP algorithm requires a baseline (also known as background dataset). If not provided, a baseline is calculated automatically by SageMaker Clarify using K-means or K-prototypes in the input dataset. Baseline dataset type shall be the same as dataset_type of DataConfig, and baseline samples shall only include features. By definition, baseline should either be a S3 URI to the baseline dataset file, or an in-place list of samples. In this case we chose the latter, and put the

first sample of the test dataset to the list.

[ ]:

shap_config = clarify.SHAPConfig(

baseline=[test_features.iloc[0].values.tolist()],

num_samples=15,

agg_method="mean_abs",

save_local_shap_values=True,

)

explainability_output_path = "s3://{}/{}/clarify-explainability".format(

default_bucket, default_prefix

)

explainability_data_config = clarify.DataConfig(

s3_data_input_path=train_uri,

s3_output_path=explainability_output_path,

label="Target",

headers=training_data.columns.to_list(),

dataset_type="text/csv",

)

[ ]:

with Run(

experiment_name=experiment_name,

run_name="explainabilit-only", # create a experiment run with only the model explainabilit on it

sagemaker_session=sagemaker_session,

) as run:

clarify_processor.run_explainability(

data_config=explainability_data_config,

model_config=model_config,

explainability_config=shap_config,

)

Viewing the Explainability Report

As with the bias report, SageMaker Experiments enables you to view the explainability report in Studio under the experiments tab. The report is made available under your experiment run page in the explanations tab

The Model Insights tab contains direct links to the report and model insights.

If you’re not a Studio user yet, as with the Bias Report, you can access this report at the following S3 bucket.

[ ]:

from __future__ import absolute_import

Combining model training, bias analysis and model explainability into one experiment report

Up until now, you have created individual SageMaker Experiment runs for each task. SageMaker Experiments will then organize each step of your model train process in its own experiment report.

With the new SageMaker SDK, you can now organize your model training, bias analysis and explainability report in the same overview report by running the SageMaker training and processing jobs in the same experiment run. In this case, your experiment run will include information of your model metrics, bias reports, explanations as well as all the provided parameters, inputs and outputs for the model training, bias and explainability jobs.

[ ]:

with Run(

experiment_name=experiment_name,

run_name="combined-report",

sagemaker_session=sagemaker_session,

) as run: # model training, bias analysis and explainability reports created in the same experiment run

xgb.fit({"train": train_input}, logs=False)

clarify_processor.run_bias(

data_config=bias_data_config,

bias_config=bias_config,

model_config=model_config,

model_predicted_label_config=predictions_config,

pre_training_methods="all",

post_training_methods="all",

)

clarify_processor.run_explainability(

data_config=explainability_data_config,

model_config=model_config,

explainability_config=shap_config,

)

[ ]:

explainability_output_path

Analysis of local explanations

It is possible to visualize the the local explanations for single examples in your dataset. You can use the obtained results from running Kernel SHAP algorithm for global explanations.

You can simply load the local explanations stored in your output path, and visualize the explanation (i.e., the impact that the single features have on the prediction of your model) for any single example.

[ ]:

local_explanations_out = pd.read_csv(explainability_output_path + "/explanations_shap/out.csv")

feature_names = [str.replace(c, "_label0", "") for c in local_explanations_out.columns.to_series()]

local_explanations_out.columns = feature_names

selected_example = 111

print(

"Example number:",

selected_example,

"\nwith model prediction:",

sum(local_explanations_out.iloc[selected_example]) > 0,

)

print("\nFeature values -- Label", training_data.iloc[selected_example])

local_explanations_out.iloc[selected_example].plot(

kind="bar", title="Local explanation for the example number " + str(selected_example), rot=90

)

Notebook CI Test Results

This notebook was tested in multiple regions. The test results are as follows, except for us-west-2 which is shown at the top of the notebook.